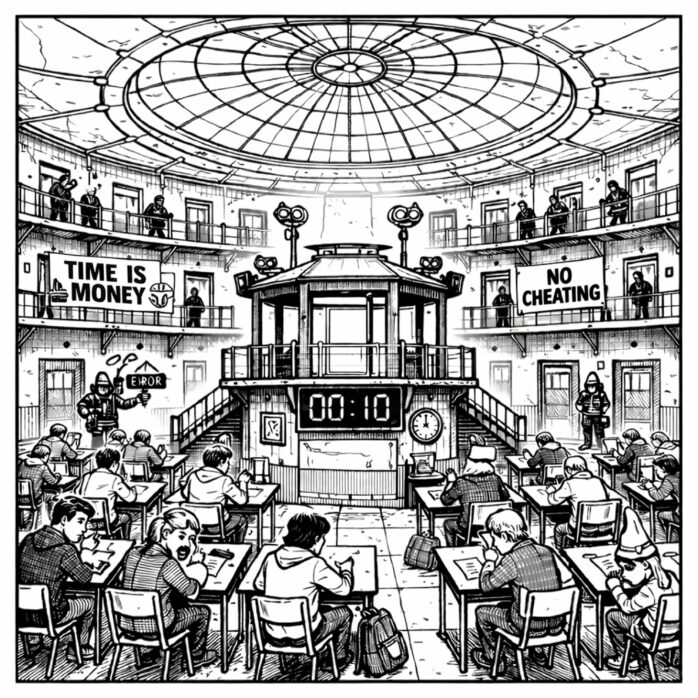

The last few months have seen my campus scrambling to get back to in-person assessment and to reopen testing centers. Like many universities that had quietly deemphasized such exams during the COVID years, UC Irvine now faces rising faculty demand to quickly change course. Many worry about the validity of take-home and online assessments, as campus officials search for rooms or even build new ones. Meanwhile, already stressed students feel increasingly desperate over high-stakes tests that can make or break academic success. While the crisis seems recent at UCI, what’s really happening predates the rise of generative AI and won’t be fixed with more exam rooms.

Much of higher education now sees online assessment as an arms race it can’t win, with over 150 institutions planning to end it this year. Earlier this month, the Law School Admission Council (LSAC) announced that it would return the LSAT to in-person testing by summer 2026, citing “security concerns,” “score inflation,” and “the misuse of technology to facilitate cheating.”[1] All Ivy League schools are also reverting to standardized tests for admissions after eliminating them during the last decade. Complicating matters further is the reality of cash-strapped schools facing infrastructure bottlenecks because they’ve repurposed or sold off testing centers.[2] Driving this frantic backtracking is the logical but incorrect belief that assessment is losing meaning at a time when ChatGPT can generate answers in a few seconds. Hence the current retreat to blue books, testing rooms, and internet-free conditions.

“Generative AI did not create assessment issues. It revealed them,” according to Emma Ransome of Birmingham City University.[3] Ransome explains that traditional measures like timed exams, standardized tasks, and recall-based tests have historically done poorly at evaluating the skills universities claim to instill, such as critical thinking, ethical judgement, and the synthesis of ideas. Generative AI has made the disconnect between what is being measured and what is being taught even more apparent.
